Despite decades of technological progress, robots still can’t move as smoothly as humans – they drop objects and struggle to pick them up properly. Scientists have been trying to teach robots to move with the same precision as humans, but hand movement is more complex than it might seem at first glance. Even a simple action, like holding your phone and scrolling, involves dozens of small muscles and joints, plus over 100 tendons and ligaments, all working together.

There are a few ways to capture those movements so robots can copy them in real time, but each method has limitations.

Cameras can capture a wide range of motions well – until visual obstacles get in the way. Sensor gloves can transmit detailed motion data and are not affected by obstacles, but wearing them limits the hand’s natural movement and sense of touch. Another method uses sensors on the wrist or forearm to measure electrical signals from muscles and predict hand movements, but those sensors struggle to detect subtle in-between motions and can be affected by background “noise.”

The new approach, developed by MIT researchers, uses ultrasound imaging and is the most precise and reliable so far. Small ultrasound stickers, about the size of a watch, are paired with compact electronics and worn on the wrist in a wristband. This setup creates clear, continuous images of the muscles and tendons as the fingers move.

Melanie Gonick

To explain how it works, Gengxi Lu, one of the researchers, uses the analogy of puppet strings.

“The tendons and muscles in your wrist are like strings pulling on puppets, which are your fingers,” he says. “So the idea is: each time you take a picture of the state of the strings, you’ll know the state of the hand.”

The hand can move in 22 different ways, called degrees of freedom, and each of these movements shows up in the ultrasound images. Researchers initially tried to match those movements with the images by hand, but this turned out to be too complex for humans to do in real time. Instead, they trained AI to recognize patterns in the ultrasound images and predict hand movements – and it worked.
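To make the idea of "predicting hand movements from images" a little more concrete, here is a minimal toy sketch. It is not the researchers' model: the article does not describe their AI in detail, so this swaps in a simple nearest-neighbor lookup, and the "ultrasound frames" are synthetic numbers generated by a made-up function. The names `synthetic_frame`, `NearestFramePredictor`, and `N_PIXELS` are hypothetical and exist only for illustration. The only figure taken from the article is the 22 degrees of freedom.

```python
import math
import random

N_DOF = 22      # degrees of freedom, the figure given in the article
N_PIXELS = 32   # assumption: size of a heavily simplified "frame"


def synthetic_frame(pose):
    """Toy stand-in for an ultrasound image (an assumption, not real data).

    Each "pixel" is a fixed nonlinear mix of the joint angles in `pose`.
    """
    return [
        math.sin(sum(angle * (1 + (p * (j + 1)) % 7)
                     for j, angle in enumerate(pose)))
        for p in range(N_PIXELS)
    ]


def distance(a, b):
    """Euclidean distance between two frames of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


class NearestFramePredictor:
    """Hypothetical predictor: return the pose of the most similar stored frame."""

    def __init__(self):
        self.frames = []
        self.poses = []

    def fit(self, frames, poses):
        # "Training" here is just remembering frame/pose pairs.
        self.frames = list(frames)
        self.poses = list(poses)
        return self

    def predict(self, frame):
        best = min(range(len(self.frames)),
                   key=lambda i: distance(frame, self.frames[i]))
        return self.poses[best]


# Build a small synthetic data set: random poses and their toy frames.
rng = random.Random(0)
train_poses = [[rng.uniform(0.0, 1.5) for _ in range(N_DOF)]
               for _ in range(200)]
train_frames = [synthetic_frame(p) for p in train_poses]

model = NearestFramePredictor().fit(train_frames, train_poses)

# A new frame comes in; the model returns 22 joint angles for the hand.
predicted = model.predict(train_frames[3])
```

A lookup like this only works for frames it has already seen something close to. A trained AI model, as described above, is meant to generalize to hand positions and wearers beyond the recorded examples.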

The system was tested with volunteers signing all 26 letters of the American Sign Language alphabet and handling different objects such as a pencil, scissors, and a tennis ball. In each case, the wristband was able to accurately predict hand positions.

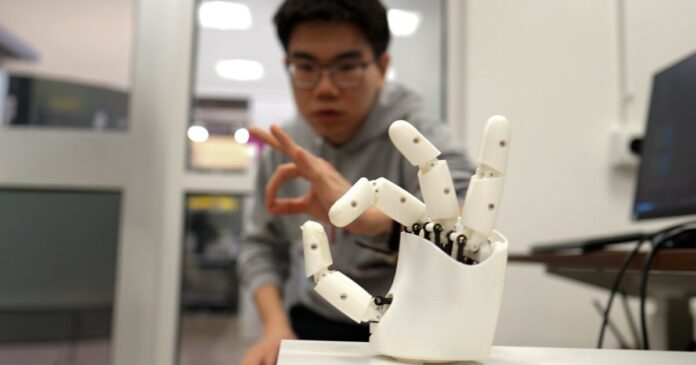

The researchers also tested the wristband as a wireless controller for a robotic hand, which could copy motions in real time – even playing a simple tune on a piano.

The ultimate goal is a smaller, wearable hand tracker that anyone can use to control robots or virtual objects wirelessly. With enough hand movement data, the AI could eventually be trained for many tasks, such as controlling devices without touch, interacting with virtual reality environments, or even assisting in surgical procedures.

A paper on the research was recently published in the journal Nature Electronics.


Source: MIT